Article 9 EU AI Act in Practice: How to Build a Risk Management System for High-Risk AI

- Joe Simms

- Apr 15

You've run the analysis. Your AI system is classified as High-Risk. Now what?

If you've followed our KI-Verordnung Framework guide, you know the four steps to clarity:

1. Scope Check — Does the AI Act apply? ✓

2. Risk Classification — What's the risk level? ✓

3. Role Determination — Provider, Deployer, or both? ✓

4. Obligations — What must you do? ← You are here

For High-Risk AI systems, one of the most critical obligations is Article 9: the Risk Management System (RMS). In our previous article, we explained what Article 9 requires. In this article, we show you how to actually build it — with a practical example, templates, and step-by-step guidance.

Connection to the KI-VO Framework:

Article 9 is part of Step 4 (Obligations) in the compliance framework. It applies specifically to High-Risk AI systems. If your system falls under Annex III or is a safety component of regulated products, this article is for you.

→ Haven't determined your risk level yet? Start with our article: "The AI Act Framework: 4 Steps to Clarity"

The Scenario: AI-Powered Recruitment Screening

Let's work with a concrete example throughout this article.

Meet "TalentMatch AI"

You're the CTO of a growing HR Tech startup. You've built an AI-powered Applicant Tracking System (ATS) called "TalentMatch AI" that:

Screens incoming CVs automatically

Ranks candidates based on job requirements

Flags "best matches" for recruiter review

Suggests interview questions based on candidate profiles

You've integrated OpenAI's API for natural language understanding and built custom ranking algorithms on top.

Why Is This High-Risk?

Under the AI Act, AI systems used in employment, workers' management, and access to self-employment are explicitly listed as High-Risk in Annex III.

Specifically, your system falls under:

Annex III, Point 4(a):

"AI systems intended to be used for the recruitment or selection of natural persons, in particular to place targeted job advertisements, to analyse and filter job applications, and to evaluate candidates."

This means:

Article 9 (Risk Management System) is mandatory

You need documentation, testing, and ongoing monitoring

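Non-compliance can result in significant penalties (under Article 99, up to EUR 15 million or 3% of worldwide annual turnover for breaches of provider obligations)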

Let's build your RMS step by step.

Step 1: Risk Identification

The first step is identifying what could go wrong — both the obvious risks and the ones you might not have considered.

Known Risks for Recruitment AI

Based on existing research and documented cases, recruitment AI systems face several well-known risks:

| Risk Category | Specific Risk | Impact |
| --- | --- | --- |
| Discrimination | Gender bias in ranking (e.g., penalising career gaps) | Fundamental rights violation |
| Discrimination | Age bias through proxy variables (graduation year, technologies listed) | Fundamental rights violation |
| Discrimination | Ethnic bias from name or location data | Fundamental rights violation |
| Data Privacy | Excessive data retention | GDPR violation |
| Data Privacy | Processing special category data (disabilities, health) | GDPR + AI Act violation |
| Transparency | Candidates don't know AI is screening them | Article 50 violation |
| Accuracy | False rejections of qualified candidates | Individual harm, business impact |

Emerging Risks to Consider

These are risks that may not be obvious initially but can emerge over time:

| Emerging Risk | How It Might Occur |
| --- | --- |
| Model drift | After 6 months, the model starts favouring certain patterns that weren't in the training data |
| Adversarial gaming | Candidates learn to "game" the system with keyword stuffing |
| Feedback loops | If only AI-selected candidates get hired, the model reinforces its own biases |
| Context shift | System trained for tech roles gets used for executive hiring without re-validation |

Using TrustTroiAI for Risk Identification

In TrustTroiAI, Finn (our Knowledge & Situation Assistant) helps you identify risks specific to your system:

Describe your AI system's purpose

Finn prompts you with relevant risk categories based on your context

You confirm, add, or modify identified risks

All risks are logged in your Risk Register

Step 2: Risk Assessment & Prioritisation

Not all risks are equal. Article 9 requires you to prioritise based on severity and probability.

The Risk Assessment Matrix

For each identified risk, assess:

Severity: How harmful is the impact if this risk materialises? (Low / Medium / High / Critical)

Probability: How likely is this to occur? (Rare / Possible / Likely / Almost Certain)

Example placements:

- Critical/Likely: Gender bias in ranking

- High/Possible: Model drift

- Medium/Likely: Keyword gaming

- High/Rare: Data breach

Prioritisation for TalentMatch AI

Based on our assessment:

| Priority | Risk | Severity | Probability | Action |
| --- | --- | --- | --- | --- |
| 1 | Gender/age bias in ranking | Critical | Likely | Immediate mitigation required |
| 2 | Lack of transparency to candidates | High | Almost Certain | Address before deployment |
| 3 | Model drift over time | High | Possible | Build monitoring from day 1 |
| 4 | Excessive data retention | Medium | Likely | Implement data lifecycle policy |
| 5 | Adversarial gaming | Medium | Possible | Monitor and adjust |

The principle: address the most severe and probable risks first.
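If you want the prioritisation to be reproducible rather than a judgment call in a meeting, a small scoring script can help. The sketch below is one possible approach, not something the AI Act prescribes: the numeric weights, the `Risk` class, and the multiply-then-sort rule are all assumptions made for illustration.

```python
from dataclasses import dataclass

# Assumed ordinal weights. The article names the levels, not the numbers.
SEVERITY = {"Low": 1, "Medium": 2, "High": 3, "Critical": 4}
PROBABILITY = {"Rare": 1, "Possible": 2, "Likely": 3, "Almost Certain": 4}


@dataclass(frozen=True)
class Risk:
    """One entry of a hypothetical risk register."""
    name: str
    severity: str
    probability: str

    @property
    def score(self) -> int:
        # Hypothetical rule: severity weight multiplied by probability weight.
        return SEVERITY[self.severity] * PROBABILITY[self.probability]


def prioritise(risks):
    """Sort by score (highest first); break ties by severity, then by name."""
    return sorted(
        risks,
        key=lambda r: (-r.score, -SEVERITY[r.severity], r.name),
    )


risks = [
    Risk("Adversarial gaming", "Medium", "Possible"),
    Risk("Excessive data retention", "Medium", "Likely"),
    Risk("Model drift over time", "High", "Possible"),
    Risk("Lack of transparency to candidates", "High", "Almost Certain"),
    Risk("Gender/age bias in ranking", "Critical", "Likely"),
]

for rank, risk in enumerate(prioritise(risks), start=1):
    print(rank, risk.name, risk.score)
```

With these assumed weights, the bias and transparency risks both score 12, and the severity tie-break puts the Critical risk first. Whatever weights you pick, record them in your assessment methodology so the ranking can be explained later.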

Step 3: Mitigation Measures

Now we apply the mitigation hierarchy established by Article 9. Remember: elimination through design takes precedence over instructions.

Applying the Hierarchy to TalentMatch AI

Level 1: Eliminate by Design

What we can remove entirely:

Remove candidate names, photos, and addresses from initial screening input

Remove graduation year (age proxy) from ranking algorithm

Don't process special category data at all
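In code, "eliminate by design" can be as simple as making sure the ranking model never receives the sensitive fields in the first place. The following is a minimal sketch under stated assumptions: the field names and the `redact_application` helper are hypothetical and would need to match your own application schema.

```python
# Hypothetical field names; adapt them to your own application schema.
EXCLUDED_FIELDS = frozenset({
    "name", "photo", "address",   # direct identifiers
    "graduation_year",            # age proxy
    "disability", "health",       # special category data
})


def redact_application(application: dict) -> dict:
    """Return a copy without the excluded fields; the input is not modified."""
    return {
        key: value
        for key, value in application.items()
        if key not in EXCLUDED_FIELDS
    }


raw = {
    "name": "Example Candidate",
    "address": "Example Street 1",
    "graduation_year": 2009,
    "skills": ["python", "sql"],
    "years_experience": 6,
}

screening_input = redact_application(raw)
print(screening_input)
```

A stricter variant would use an allowlist of permitted fields instead of a blocklist, so that newly added fields are excluded by default. Note that dropping structured fields does not remove the same information from free-text CV content, which needs separate handling.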

Level 2: Technical Safeguards

What we can detect and prevent:

Implement bias detection dashboard tracking selection rates by demographic groups

Add demographic parity alerts when disparities exceed thresholds

Build confidence scores with automatic human escalation for borderline cases

Implement model monitoring for drift detection
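The confidence-based escalation in the list above could look roughly like this. The thresholds, the `route_candidate` function, and the outcome labels are illustrative assumptions; the right band depends on how your scores are calibrated.

```python
def route_candidate(confidence: float, low: float = 0.35, high: float = 0.65) -> str:
    """Route a match score in [0, 1]; scores inside [low, high] go to a human.

    The default thresholds are placeholders, not recommended values.
    """
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if low <= confidence <= high:
        return "human_review"          # borderline case: escalate
    if confidence > high:
        return "suggest_shortlist"
    return "suggest_reject"            # still only a suggestion


for score in (0.10, 0.50, 0.90):
    print(score, route_candidate(score))
```

The outputs are deliberately named as suggestions: a "suggest_reject" result is a recommendation to a recruiter, not a final decision.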

Level 3: Organisational Measures

Human oversight and processes:

Mandatory human review for all rejections (no fully automated decisions with legal effect)

Quarterly bias audits by independent team

Clear escalation path for candidate complaints

Regular model re-validation schedule

Level 4: User Instructions

Guidance for deployers (companies using your ATS):

Clear documentation on intended use and limitations

Training requirements for HR staff using the system

Guidelines on combining AI recommendations with human judgment

Instructions for handling candidate inquiries about AI use

Level 5: Residual Risk Documentation

What remains after all measures:

Document that some false positives/negatives will occur

Justify acceptance based on: (1) state of the art, (2) human oversight in place, (3) continuous monitoring

Inform deployers of residual risks in system documentation

Step 4: Documentation

Documentation isn't bureaucracy — it's your evidence of compliance and your protection in case of audits or incidents.

What to Document

Article 9 requires documentation of:

| Document | Content | Update Frequency |
| --- | --- | --- |
| Risk Register | All identified risks with assessments | Ongoing (as risks emerge) |
| Mitigation Plan | Measures for each risk, mapped to hierarchy | After each risk assessment |
| Residual Risk Justification | Why remaining risks are acceptable | After mitigation implementation |
| Testing Records | Test procedures, metrics, results | Before deployment + after updates |
| Monitoring Plan | What you'll track post-deployment | Before deployment |
| Incident Log | Any issues discovered and how they were addressed | Ongoing |

Connection to Article 17 (Quality Management System)

Your RMS documentation feeds into your broader Quality Management System. The QMS provides the organisational structure; the RMS provides the risk-specific content.

Think of it as:

QMS = How your organisation ensures quality and compliance (processes, responsibilities, audits)

RMS = What specific risks exist and how you address them (content)

Step 5: Post-Market Monitoring Plan

Article 9 requires continuous monitoring after deployment. Here's what to set up:

Metrics to Track for TalentMatch AI

| Metric | What It Measures | Alert Threshold |
| --- | --- | --- |
| Selection rate by gender | Gender bias | >10% disparity |
| Selection rate by age group | Age bias | >15% disparity |
| False rejection rate | Accuracy | >5% (validated sample) |
| Model confidence distribution | Drift indicator | Shift in distribution |
| Candidate complaints | User experience | Any mention of unfairness |
| System override rate | Human oversight effectiveness | <20% or >80% (both concerning) |
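Two of these metrics are easy to compute from decision logs. The sketch below assumes that "disparity" means the absolute gap between the highest and lowest group selection rates, and that the override rate is the share of AI recommendations a recruiter changed. Both readings are assumptions, as are the function names.

```python
from collections import Counter


def selection_rates(records):
    """records: iterable of (group, selected) pairs -> {group: selection rate}."""
    totals, selected = Counter(), Counter()
    for group, was_selected in records:
        totals[group] += 1
        if was_selected:
            selected[group] += 1
    return {group: selected[group] / totals[group] for group in totals}


def disparity(rates):
    """Absolute gap between the highest and lowest group selection rate."""
    return max(rates.values()) - min(rates.values())


def override_rate_alert(overrides, decisions, low=0.20, high=0.80):
    """True when recruiters override the AI suspiciously rarely or often."""
    if decisions <= 0:
        raise ValueError("decisions must be positive")
    rate = overrides / decisions
    return rate < low or rate > high


# Made-up example data: 100 decisions per group.
records = (
    [("women", True)] * 25 + [("women", False)] * 75
    + [("men", True)] * 40 + [("men", False)] * 60
)
rates = selection_rates(records)
gap = disparity(rates)
print(rates, round(gap, 2), "ALERT" if gap > 0.10 else "ok")
print("override alert:", override_rate_alert(overrides=10, decisions=100))
```

In this made-up sample the gap is 15 percentage points, which crosses the 10% gender threshold. With small groups the rates are noisy, so it is worth logging the group sizes alongside every alert.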

Feedback Loops

Build mechanisms to learn from deployment:

Recruiter feedback on AI recommendations (accurate? helpful?)

Hiring outcome tracking (did AI-selected candidates succeed?)

Candidate feedback surveys

Regular comparison of AI rankings vs. human rankings
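For the last item, one common way to compare two rankings is Spearman's rank correlation. The sketch below uses the textbook formula for rankings without ties; the candidate IDs and the `spearman_rho` helper are illustrative, and a statistics library would be the better choice if your rankings contain ties.

```python
def spearman_rho(ai_rank: dict, human_rank: dict) -> float:
    """Spearman correlation for two tie-free rankings of the same candidates.

    Both arguments map candidate ID -> rank position (1 = best).
    """
    if ai_rank.keys() != human_rank.keys():
        raise ValueError("both rankings must cover the same candidates")
    n = len(ai_rank)
    if n < 2:
        raise ValueError("need at least two candidates")
    squared_diffs = sum((ai_rank[c] - human_rank[c]) ** 2 for c in ai_rank)
    return 1 - 6 * squared_diffs / (n * (n * n - 1))


ai = {"c1": 1, "c2": 2, "c3": 3, "c4": 4, "c5": 5}
human = {"c1": 2, "c2": 1, "c3": 3, "c4": 5, "c5": 4}

print(round(spearman_rho(ai, human), 2))
```

A value near 1 means the AI and the recruiter order candidates similarly, and a value near 0 or below means they disagree. Disagreement is not automatically a model error, but a falling trend over several review periods is a signal worth investigating.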

Emerging Risk Detection

Schedule quarterly reviews to ask:

Are there new patterns in the data we didn't anticipate?

Have user behaviours changed (gaming, workarounds)?

Has the job market shifted in ways that affect our model?

Are there new research findings on recruitment AI risks?
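To back the first question with numbers, you could compare the distribution of model confidence scores between a reference period and the current one. The sketch below uses the Population Stability Index (PSI), a technique chosen here for illustration and not named in the AI Act. The equal-width bins, the `eps` floor, and the 0.25 alert level (a widely used rule of thumb) are assumptions.

```python
import math


def population_stability_index(reference, current, bins=10, eps=1e-6):
    """PSI between two samples of scores in [0, 1], using equal-width bins."""
    if not reference or not current:
        raise ValueError("both samples must be non-empty")

    def proportions(scores):
        counts = [0] * bins
        for score in scores:
            index = min(int(score * bins), bins - 1)  # 1.0 goes in the last bin
            counts[index] += 1
        # Floor empty bins at eps so the logarithm below stays defined.
        return [max(count / len(scores), eps) for count in counts]

    ref, cur = proportions(reference), proportions(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref, cur))


# Made-up data: scores spread evenly at launch, concentrated in the top half later.
reference = [i / 100 for i in range(100)]
shifted = [0.5 + i / 200 for i in range(100)]

psi = population_stability_index(reference, shifted)
print(round(psi, 2), "review needed" if psi > 0.25 else "stable")
```

Identical distributions give a PSI of 0. A high value only tells you that the score distribution has moved, not why, so treat it as a trigger for the quarterly review rather than a verdict.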

Step 6: Testing & Validation

Before deployment and after significant updates, you need to test against predefined metrics.

Testing Protocol for TalentMatch AI

| Test Type | What You Test | Metrics | When |
| --- | --- | --- | --- |
| Bias testing | Demographic parity | Selection rates across groups | Pre-deployment + quarterly |
| Accuracy testing | Prediction quality | False positive/negative rates | Pre-deployment + after updates |
| Robustness testing | Adversarial inputs | Performance on edge cases | Pre-deployment |
| Real-world validation | Actual hiring outcomes | Correlation with job success | 6 months post-deployment |
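For the accuracy row, the false positive and false negative rates can be computed from a sample in which humans have independently judged whether each candidate was qualified. This is a sketch under that assumption; the `error_rates` helper and the sample numbers are made up for illustration.

```python
def error_rates(samples):
    """samples: iterable of (ai_selected, qualified) booleans from a validated set.

    Returns the false rejection rate (qualified candidates the AI rejected)
    and the false positive rate (unqualified candidates the AI selected).
    A rate is None when the sample contains no candidates of that kind.
    """
    qualified_total = unqualified_total = 0
    false_rejections = false_positives = 0
    for ai_selected, qualified in samples:
        if qualified:
            qualified_total += 1
            if not ai_selected:
                false_rejections += 1
        else:
            unqualified_total += 1
            if ai_selected:
                false_positives += 1
    return {
        "false_rejection_rate": (
            false_rejections / qualified_total if qualified_total else None
        ),
        "false_positive_rate": (
            false_positives / unqualified_total if unqualified_total else None
        ),
    }


# Made-up validated sample: 50 qualified and 150 unqualified candidates.
samples = (
    [(True, True)] * 46 + [(False, True)] * 4
    + [(True, False)] * 15 + [(False, False)] * 135
)
result = error_rates(samples)
print(result)
```

In this sample, 4 of 50 qualified candidates were rejected, a false rejection rate of 8%. Defining the pass threshold before running the test is what makes the metric "predefined".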

Documentation for Article 60

If you conduct real-world testing (testing with actual candidates before full deployment), you need to comply with Article 60 requirements:

Informed consent from test participants

Safety measures and human oversight

Clear test boundaries and duration

Documentation of results and any issues

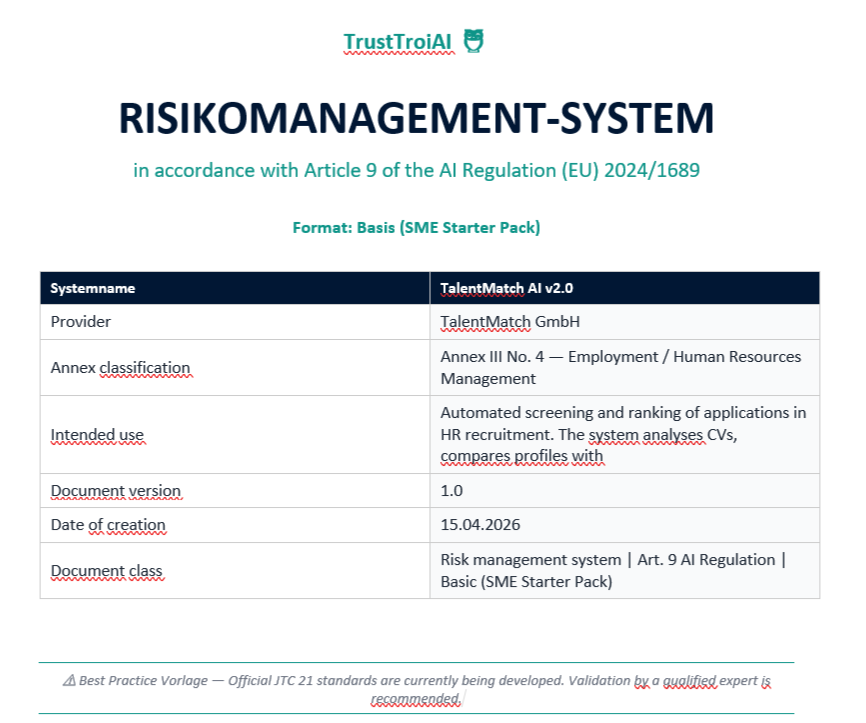

The TrustTroiAI Article 9 Risk Management System Templates

Building an RMS from scratch is complex. That's why we've created templates specifically for Article 9 compliance.

What's Included

1. Risk Register Template

Pre-structured categories for High-Risk AI

Fields for severity, probability, and prioritisation

Mapping to fundamental rights impacts

2. Mitigation Plan Template

Organised by the 5-level hierarchy

Links each measure to specific risks

Tracks implementation status

3. RMS Documentation Template

Complete structure aligned with Article 9 requirements

Guidance text for each section

Ready for QMS integration

4. Post-Market Monitoring Checklist

Metrics to track by system type

Alert threshold recommendations

Review schedule templates

How It Works

1. Start with Scope Check: Confirm your system is High-Risk

2. Guided Risk Identification: Finn prompts you with relevant risk categories

3. Fill the Templates: Enter your specific risks, assessments, and measures

4. Generate Documentation: Export a complete DOCX ready for your records

Finn: Your RMS Assistant

Have questions while filling out the templates?

"Is this risk severity assessment correct for my context?"

"What technical safeguards are recommended for recruitment AI?"

"How should I document this residual risk?"

Finn knows your specific system and provides context-aware answers.

Bruno: Expert Validation

For High-Risk systems, the stakes are high. When you need certainty:

Submit your completed RMS for expert review

Get written feedback from qualified compliance experts

Have documented validation for audits and regulators

Common Mistakes to Avoid

After helping many teams build their RMS, we've seen patterns in what goes wrong:

Mistake 1: Treating It as a One-Time Exercise

Wrong: "We did our risk assessment before launch. Done."

Right: Risk management is continuous. Schedule quarterly reviews at minimum.

Mistake 2: Ignoring Emerging Risks

Wrong: "We documented the known risks. That's what the law requires."

Right: Article 9 explicitly requires you to consider emerging risks. Build detection mechanisms.

Mistake 3: Skipping Documentation

Wrong: "We mitigated the risks. Why do we need to write it down?"

Right: Without documentation, you have no evidence of compliance. Document everything.

Mistake 4: No Real Testing

Wrong: "It works in our test environment. Ship it."

Right: Article 9 requires testing against predefined metrics. Validate with real-world data.

Mistake 5: RMS in Isolation

Wrong: "Our data scientist manages the RMS. It's separate from our QMS."

Right: RMS and QMS must be structurally linked. Integrate them.

Your RMS Checklist

Before you consider your Article 9 Risk Management System complete, verify:

Risk Identification

□ All known risks documented

□ Emerging risks considered

□ Risks mapped to fundamental rights impacts

Risk Assessment

□ Severity and probability assessed for each risk

□ Prioritisation completed

□ Assessment methodology documented

Mitigation

□ Measures applied following the hierarchy

□ Each risk has assigned mitigation measures

□ Residual risks justified and documented

Documentation

□ Risk Register complete

□ Mitigation Plan documented

□ Testing records available

□ Linked to QMS

Monitoring

□ Post-market monitoring plan in place

□ Metrics and thresholds defined

□ Review schedule established

Conclusion: From Obligation to Advantage

Building a Risk Management System for your High-Risk AI isn't just about compliance. It's about:

Building trust with customers who want to know their AI tools are safe

Protecting your users — the candidates whose careers are affected by your system

Reducing liability by documenting your due diligence

Future-proofing as AI regulation becomes the global norm

For TalentMatch AI — and for your High-Risk AI system — Article 9 compliance is achievable. With the right framework, templates, and guidance, you can build an RMS that protects people and positions your company as a responsible AI provider.

Ready to Build Your RMS?

Start with a free Scope Check to confirm your obligations, then access our Article 9 templates.

→ trusttroiai.com/scope-check

Discover the TrustTroiAI Universe:

Meet Troi, Finn, Bruno, and the other characters that guide you through AI compliance.

Source

[1] Regulation (EU) 2024/1689 — Artificial Intelligence Act

Article 9 (Risk Management System)

Article 17 (Quality Management System)

Article 60 (Testing in Real-World Conditions)

Annex III, Point 4(a) (Employment and Workers Management)

[3] The Academic Guide to AI Act Compliance

hal-05365570v1

[4] CEN-CENELEC JTC 21

Joint Technical Committee on Artificial Intelligence

Developing harmonised standards for AI Act compliance

[5] TrustTroiAI

"The AI Act Framework: 4 Steps to Clarity"
