
Article 11 in Practice: How to Build Technical Documentation for High-Risk AI

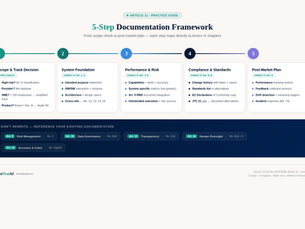

In our previous article, we explained what Article 11 requires: the nine points of Annex IV, the SME track, and how the technical documentation connects to almost every other obligation in the AI Act. Now the question is: how do you actually build it? Theory is useful, but when you sit down to create your technical documentation, you need a concrete process, not just a list of requirements. In this article, we walk through the entire documentation process step by step, using…

cici BEL

Apr 29 · 12 min read

Article 9 EU AI Act in Practice: How to Build a Risk Management System for High-Risk AI

You've run the analysis. Your AI system is classified as High-Risk. Now what? If you've followed our KI-Verordnung Framework guide, you know the four steps to clarity:

1. Scope Check: Does the AI Act apply? ✓
2. Risk Classification: What's the risk level? ✓
3. Role Determination: Provider, Deployer, or both? ✓
4. Obligations: What must you do? ← You are here

For High-Risk AI systems, one of the most critical obligations is Article 9: the Risk Management System (RMS). In…

Joe Simms

Apr 15 · 8 min read

Article 9 EU AI Act: The Risk Management System Explained

180 pages. 113 articles. And if you're building a High-Risk AI system, Article 9 might be the most important one to understand. Why? Because Article 9 doesn't ask you to tick a box once. It requires you to build and maintain a Risk Management System (RMS): a continuous, adaptive process that spans the entire lifecycle of your AI system. If Article 50 is about transparency (telling people they're interacting with AI), Article 9 is about responsibility: systematically…

jimsigne

Apr 7 · 8 min read
