Article 11 in Practice: How to Build Technical Documentation for High-Risk AI

- cici BEL

- Apr 29

- 12 min read

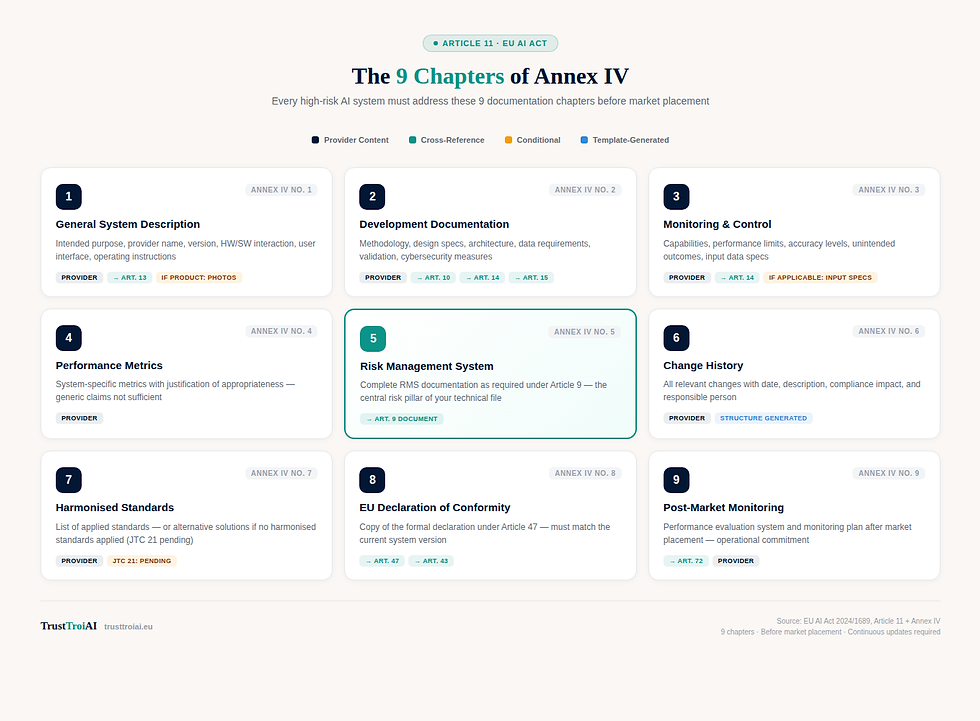

In our previous article, we explained what Article 11 requires — the 9 chapters of Annex IV, the SME track, and how the technical documentation connects to almost every other obligation in the AI Act.

Now the question is: how do you actually build it?

Theory is useful. But when you sit down to create your technical documentation, you need a concrete process — not just a list of requirements. In this article, we walk through the entire documentation process step by step, using a practical example you already know from our Article 9 and Article 10 guides: TalentMatch AI.

This is Part 2 of our Article 11 series.

If you haven't read the theory yet, start with: "Article 11 EU AI Act: Technical Documentation Explained"

Already completed your Article 9 risk management documentation? Good — you'll need it for Annex IV Chapter 5.

The Scenario: Building Documentation for TalentMatch AI

You are the CTO of a growing HR Tech startup. Your product — TalentMatch AI — is an AI-powered Applicant Tracking System that screens incoming CVs, ranks candidates based on job requirements, flags best matches for recruiter review, and suggests interview questions based on candidate profiles. It uses OpenAI's API for natural language understanding and custom ranking algorithms trained on 50,000 historical applications.

Under the AI Act, TalentMatch AI is classified as high-risk under Annex III, Point 4(a): AI systems used for recruitment and selection of natural persons. You have already completed your Article 9 risk management system and your Article 10 data governance documentation. Now it is time to assemble the complete technical file under Article 11.

Your company has 35 employees — you qualify as an SME.

The 5-Step Documentation Framework

Building Annex IV documentation does not mean starting from scratch for every chapter. If you have been following the AI Act systematically — Article 9 first, then Article 10, then Article 14 — you already have significant portions of your technical file ready.

Here is the framework:

Step 1: Scope & Track Decision (confirm high-risk classification, choose SME or full track)

Step 2: System Foundation (Annex IV No. 1–2: system description and development documentation)

Step 3: Performance & Risk (Annex IV No. 3–5: monitoring, metrics, risk management)

Step 4: Compliance & Standards (Annex IV No. 6–8: change history, standards, conformity declaration)

Step 5: Post-Market Plan (Annex IV No. 9: post-market monitoring system)

Each step maps directly to specific Annex IV chapters. Let's walk through them with TalentMatch AI.
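To make the mapping concrete, here is a minimal sketch that records which Annex IV points each step covers and checks that none of the nine is left unassigned. The dictionary layout and the function name `uncovered_points` are our own illustrative choices, not part of any official template.

```python
# Illustrative mapping of the five framework steps to Annex IV points.
# Step 1 is a precondition and does not correspond to an Annex IV point.
FRAMEWORK: dict[str, list[int]] = {
    "Step 1: Scope & Track Decision": [],
    "Step 2: System Foundation": [1, 2],
    "Step 3: Performance & Risk": [3, 4, 5],
    "Step 4: Compliance & Standards": [6, 7, 8],
    "Step 5: Post-Market Plan": [9],
}


def uncovered_points(framework: dict[str, list[int]], total: int = 9) -> list[int]:
    """Return the Annex IV points (1..total) that no step covers."""
    covered = {point for points in framework.values() for point in points}
    return [point for point in range(1, total + 1) if point not in covered]


if __name__ == "__main__":
    # An empty list means every Annex IV point has an owner step.
    print(uncovered_points(FRAMEWORK))
```

A check like this is only a planning aid: it confirms that every point has been assigned to a step, not that the content behind it is sufficient.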

Step 1: Scope & Track Decision

Before writing a single word of documentation, you need to answer three questions:

Is TalentMatch AI high-risk? Yes. AI systems for recruitment and candidate evaluation are explicitly listed in Annex III, Point 4(a). This was confirmed during the Article 9 risk assessment.

Are we the provider? Yes. Your company developed TalentMatch AI and places it on the EU market. You are not a deployer using someone else's system — you built it.

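Do we qualify for the SME track? Yes. With 35 employees, you are well under the SME headcount ceiling (fewer than 250 employees), provided you also stay within the financial ceilings of the EU SME definition (annual turnover of at most €50 million or a balance sheet total of at most €43 million). You can use the simplified documentation format once the European Commission publishes it.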

Practical decision for TalentMatch AI:

Since the SME form is not yet available (as of April 2026), we build a full Annex IV structure but at reduced detail depth, matching the SME intent. When the official form is released, we can adapt. The key principle: all 9 chapters must be addressed, but the level of technical detail can be proportionate to the company size.

One additional check: Is TalentMatch AI integrated into a product under Annex I Section A (like a medical device or machinery)? No, it is a standalone software system. So the single-file requirement of Article 11(2) does not apply here. TalentMatch AI follows the standard Annex IV documentation path.
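The scope questions above can be captured as a small decision function. This is a sketch under our own assumptions: the class `ScopeAnswers`, the function `decide_track`, and the track labels are hypothetical names chosen for illustration, and the logic only mirrors the reasoning in this article, not a legal determination.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ScopeAnswers:
    high_risk: bool           # e.g. listed in Annex III
    is_provider: bool         # developed the system and places it on the market
    is_sme: bool              # meets the SME criteria
    annex_i_section_a: bool   # related to a product under Annex I Section A
    sme_form_published: bool  # has the simplified SME form been released?


def decide_track(answers: ScopeAnswers) -> dict:
    """Return the documentation path implied by the scope answers."""
    if not (answers.high_risk and answers.is_provider):
        # Outside the scenario this article covers.
        return {"in_scope": False, "track": None, "single_file": False}
    if answers.is_sme and answers.sme_form_published:
        track = "sme-simplified-form"
    elif answers.is_sme:
        # Interim approach from the article: all chapters, reduced depth.
        track = "full-structure-reduced-depth"
    else:
        track = "full"
    return {
        "in_scope": True,
        "track": track,
        "single_file": answers.annex_i_section_a,  # Article 11(2) case
    }


if __name__ == "__main__":
    talentmatch = ScopeAnswers(
        high_risk=True,
        is_provider=True,
        is_sme=True,
        annex_i_section_a=False,
        sme_form_published=False,
    )
    print(decide_track(talentmatch))
```

Recording the answers in a structure like this also gives you a dated, reviewable trace of why a particular track was chosen.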

Step 2: System Foundation (Annex IV No. 1–2)

The first two chapters form the foundation of your technical file. They answer the questions: what is this system, and how was it built?

Writing the Intended Purpose

The intended purpose is arguably the most important sentence in your entire technical documentation. Everything else — risk assessment, performance metrics, human oversight — derives from this statement.

For TalentMatch AI, a good intended purpose looks like this:

TalentMatch AI — Intended Purpose:

"AI-powered applicant tracking system for automated screening and ranking of job applications, intended for use by trained HR professionals in the recruitment process. The system analyses CV content against job requirement profiles and produces ranked candidate shortlists for human review. It does not make autonomous hiring decisions."

Notice what this statement includes: the function (screening and ranking), the user (trained HR professionals), the scope (recruitment process), and, critically, the boundary (no autonomous decisions). This boundary directly connects to your Article 14 human oversight documentation.

Completing the System Description

Beyond the intended purpose, Annex IV No. 1 requires seven more elements. For TalentMatch AI:

Hardware/software interaction: TalentMatch AI operates as a cloud-based SaaS application. It integrates with the OpenAI API for natural language processing, a PostgreSQL database for candidate data, and standard web browsers for the user interface. No specialised hardware is required.

Software versions: Current version v3.2. Uses OpenAI GPT-4o API (version pinned), Python 3.11 backend, React 18 frontend. Update policy: quarterly feature releases, monthly security patches.

Forms of market placement: SaaS subscription model, accessed via web application. No on-premise deployment option.

Hardware requirements: Modern web browser (Chrome 90+, Firefox 88+, Edge 90+). Minimum 4 Mbps internet connection.

User interface: Web-based dashboard with candidate list view, individual candidate profiles, ranking explanations, and interview question suggestions.

Operating instructions: Reference to the Article 13 transparency and information document (separate deliverable).

Photos or illustrations are not required — TalentMatch AI is not integrated into a physical product.
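One way to keep the system description complete is to hold its elements in a single record and check for empty fields. The sketch below assumes a hypothetical `SystemDescription` class with field names of our own choosing; the only rule it encodes from the text above is that photos are needed only when the system is integrated into a physical product.

```python
from dataclasses import dataclass, fields


@dataclass
class SystemDescription:
    # Field names are illustrative labels for the elements discussed above.
    intended_purpose: str = ""
    hw_sw_interaction: str = ""
    software_versions: str = ""
    market_placement: str = ""
    hardware_requirements: str = ""
    user_interface: str = ""
    operating_instructions: str = ""
    product_photos: str = ""
    integrated_in_product: bool = False


def missing_elements(description: SystemDescription) -> list[str]:
    """List the text fields that are still empty and actually required."""
    missing = []
    for field in fields(description):
        if field.name == "integrated_in_product":
            continue  # a flag, not a documentation element
        if field.name == "product_photos" and not description.integrated_in_product:
            continue  # photos only matter for product-integrated systems
        if not getattr(description, field.name).strip():
            missing.append(field.name)
    return missing


if __name__ == "__main__":
    draft = SystemDescription(
        intended_purpose="Screening and ranking of job applications for HR review",
        hw_sw_interaction="Cloud SaaS; OpenAI API; PostgreSQL; web browser",
        software_versions="v3.2",
    )
    print(missing_elements(draft))
```

Running the check on a draft shows at a glance which parts of the chapter still need input before review.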

Development Documentation

Annex IV No. 2 is where the technical depth increases. For TalentMatch AI, this chapter must cover:

Development methodology: Agile development with 2-week sprints. ML model development follows a structured pipeline: data collection → preprocessing → feature engineering → model training → validation → deployment.

Design specifications: Two-stage ranking algorithm. Stage 1: NLU-based CV parsing using OpenAI API. Stage 2: Custom gradient-boosted ranking model trained on historical hiring outcomes. Key design decision: human-in-the-loop architecture — the system recommends, HR professionals decide.

System architecture: Three-tier architecture (presentation, application, data). Application layer handles API orchestration, model inference, and result aggregation. Documented as architecture diagram with data flow.

Four elements in this chapter connect directly to existing documentation:

→ Data requirements — reference your Article 10 data governance document (datasheets for 50,000 applications, 2,000 performance records, 500 job descriptions)

→ Human oversight assessment — reference your Article 14 documentation

→ Validation and testing — reference your Article 9 RMS testing procedures and Article 15 accuracy documentation

→ Cybersecurity measures — reference your Article 15 cybersecurity documentation

This is where the cross-reference architecture saves significant effort. You do not rewrite your Article 10 data governance documentation — you reference it and note the connection.
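The cross-reference idea can be sketched as a small registry that maps each referencing element to the documents it depends on, then reports what is still missing. The sub-point labels follow the Annex IV No. 2 elements named above; the document identifiers such as `art10-data-governance` are hypothetical placeholders for your own file names.

```python
# Which existing documents each Annex IV No. 2 element points to.
# Document identifiers are made up for illustration.
CROSS_REFS: dict[str, list[str]] = {
    "2(d) data requirements": ["art10-data-governance"],
    "2(e) human oversight assessment": ["art14-human-oversight"],
    "2(g) validation and testing": ["art9-rms", "art15-accuracy"],
    "2(h) cybersecurity measures": ["art15-cybersecurity"],
}


def unresolved_references(
    cross_refs: dict[str, list[str]], available: set[str]
) -> dict[str, list[str]]:
    """Return, per element, the referenced documents that do not exist yet."""
    gaps: dict[str, list[str]] = {}
    for element, doc_ids in cross_refs.items():
        missing = [doc_id for doc_id in doc_ids if doc_id not in available]
        if missing:
            gaps[element] = missing
    return gaps


if __name__ == "__main__":
    finished = {"art9-rms", "art10-data-governance", "art14-human-oversight"}
    for element, missing in unresolved_references(CROSS_REFS, finished).items():
        print(f"{element}: still needs {', '.join(missing)}")
```

Keeping the registry in one place means a renamed or superseded document only has to be updated once.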

Step 3: Performance & Risk (Annex IV No. 3–5)

These three chapters cover how the system performs, how you measure that performance, and how you manage the risks.

Capabilities and Limitations

Annex IV No. 3 requires honest documentation of what TalentMatch AI can and cannot do:

Capabilities: Screens up to 500 applications per job posting. Produces ranked shortlists of top 10–20 candidates. Provides explainable ranking factors for each candidate. Supports 12 languages for CV parsing.

Performance limits: Accuracy degrades for CVs with non-standard formatting (handwritten, image-based, or heavily designed CVs). Language support is strongest for English and German — accuracy drops for less common languages. The system has not been validated for executive-level positions (C-suite and board appointments).

Foreseeable unintended outcomes: Risk of proxy discrimination through correlated variables (university prestige correlating with socioeconomic background). Risk of over-reliance by HR professionals on AI rankings. Risk of performance degradation over time if job market patterns shift.

This connects directly to the risk identification work from your Article 9 documentation. If you identified these risks in your RMS, reference that analysis here.

Choosing the Right Metrics

Annex IV No. 4 requires that your performance metrics are appropriate for the specific system. Generic metrics are not sufficient.

For TalentMatch AI, appropriate metrics include:

Ranking quality: Normalised Discounted Cumulative Gain (NDCG@10) — measures whether the best candidates appear at the top of the ranked list.

Fairness metrics: Demographic parity ratio and equalised odds across protected characteristics (gender, age, ethnicity) — critical for a recruitment system under Annex III.

Consistency: Test-retest reliability. The same CV submitted twice against the same job posting should land within ±2 ranking positions of its first result.

Why these metrics? A generic accuracy percentage (e.g. "92% accurate") would be meaningless for TalentMatch AI. What does "accurate" mean for a ranking system? NDCG measures ranking quality specifically. Fairness metrics are not optional for an employment AI system — they are directly implied by the risk profile under Annex III.
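For readers who want to see what these three metrics compute, here is a minimal sketch in plain Python. It assumes graded relevance labels for NDCG (using the linear-gain variant), a binary shortlisted flag per candidate for demographic parity, and the ±2 tolerance stated above for test-retest. Function names and input shapes are our own; a production system would more likely rely on an established evaluation library.

```python
import math


def dcg_at_k(relevances: list[float], k: int) -> float:
    """Discounted cumulative gain over the first k ranked items (linear gain)."""
    return sum(rel / math.log2(pos + 2) for pos, rel in enumerate(relevances[:k]))


def ndcg_at_k(relevances: list[float], k: int = 10) -> float:
    """NDCG@k: DCG of the actual order divided by DCG of the ideal order."""
    ideal = dcg_at_k(sorted(relevances, reverse=True), k)
    return dcg_at_k(relevances, k) / ideal if ideal > 0 else 0.0


def demographic_parity_ratio(shortlisted: list[bool], groups: list[str]) -> float:
    """Lowest group selection rate divided by the highest (1.0 means parity)."""
    if not groups or len(shortlisted) != len(groups):
        raise ValueError("inputs must be non-empty and of equal length")
    totals: dict[str, int] = {}
    selected: dict[str, int] = {}
    for was_selected, group in zip(shortlisted, groups):
        totals[group] = totals.get(group, 0) + 1
        selected[group] = selected.get(group, 0) + int(was_selected)
    rates = [selected[group] / totals[group] for group in totals]
    highest = max(rates)
    # If nobody was selected, no disparity can be measured; this sketch
    # returns 1.0 by convention in that case.
    return min(rates) / highest if highest > 0 else 1.0


def within_retest_tolerance(first_rank: int, second_rank: int, tolerance: int = 2) -> bool:
    """True if two rankings of the same CV differ by at most `tolerance` positions."""
    return abs(first_rank - second_rank) <= tolerance


if __name__ == "__main__":
    print(ndcg_at_k([3, 2, 3, 0, 1, 2]))
    print(demographic_parity_ratio([True, False, True, True], ["a", "a", "b", "b"]))
    print(within_retest_tolerance(4, 6))
```

Equalised odds is omitted here because it needs ground-truth outcomes per candidate; the same per-group pattern applies once those labels are available.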

Connecting Your Article 9 Work

Annex IV No. 5 requires a detailed description of the risk management system under Article 9. If you followed our Article 9 guide, you already have this document. For TalentMatch AI, the RMS documentation covers:

→ The three-pillar lifecycle approach (identify → mitigate → monitor)

→ Known risks (proxy discrimination, over-reliance, data drift) and emerging risks

→ The five-level mitigation hierarchy from elimination to monitoring

→ Residual risk assessment for each identified risk

This chapter does not require new work — it references or includes the Article 9 document as a component of the technical file.

Step 4: Compliance & Standards (Annex IV No. 6–8)

These three chapters handle the formal compliance infrastructure around your system.

The Change Log That Regulators Want

Annex IV No. 6 requires a documented change history — but not the kind developers are used to. This is not a Git commit log. It is a compliance record that tracks every change relevant to the system's conformity status.

For TalentMatch AI, a proper change record looks like this:

| Date | Change Description | Impact on Compliance | Responsible |
| --- | --- | --- | --- |
| 2025-09-15 | Updated OpenAI API from GPT-4 to GPT-4o | NLU component changed; revalidation of ranking accuracy required (Annex IV No. 3, 4) | CTO |
| 2025-11-02 | Added Portuguese language support | Extended capability; updated system description (Annex IV No. 1) and performance limits (No. 3) | ML Lead |
| 2026-01-20 | Retrained ranking model with Q4 2025 application data | Training data changed; updated data governance documentation (Art. 10) and fairness metrics (No. 4) | Data Team |
| 2026-03-1 | Updated bias mitigation algorithm v2.1 | Risk mitigation measures changed; updated RMS documentation (Art. 9 / Annex IV No. 5) | ML Lead |

Each entry identifies the date, what changed, which Annex IV sections are affected, and who is responsible. This is the level of traceability that authorities and notified bodies expect.
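If you keep the change history in code or a structured store, a record type can enforce that no entry is saved without its compliance context. The following is a sketch only: `ChangeRecord` and its field names are hypothetical, and the validation rules simply mirror the four columns described above.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class ChangeRecord:
    changed_on: date
    description: str
    compliance_impact: str
    affected_sections: tuple[str, ...]  # e.g. ("Annex IV No. 1", "Annex IV No. 3")
    responsible: str

    def __post_init__(self) -> None:
        # A compliance record without these two pieces is just a commit note.
        if not self.affected_sections:
            raise ValueError("a change record must name the affected sections")
        if not self.responsible.strip():
            raise ValueError("a change record must name a responsible person or role")

    def as_row(self) -> str:
        """Render the record in the four-column layout used in this article."""
        sections = ", ".join(self.affected_sections)
        return (
            f"{self.changed_on.isoformat()} | {self.description} | "
            f"{self.compliance_impact} ({sections}) | {self.responsible}"
        )


if __name__ == "__main__":
    record = ChangeRecord(
        changed_on=date(2025, 11, 2),
        description="Added Portuguese language support",
        compliance_impact="Extended capability",
        affected_sections=("Annex IV No. 1", "Annex IV No. 3"),
        responsible="ML Lead",
    )
    print(record.as_row())
```

Making the record immutable (`frozen=True`) is a deliberate choice here: corrections are added as new entries rather than by editing history.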

Standards: What to Do Right Now

Annex IV No. 7 requires a list of applied harmonised standards. For TalentMatch AI in April 2026, the honest answer is: the final AI Act harmonised standards from CEN-CENELEC JTC 21 have not been published yet.

This does not mean the chapter stays empty. You document the alternative solutions you apply:

→ IEEE 7003-2024 — Standard for Algorithmic Bias Considerations (applied to fairness metrics and bias mitigation)

→ ISO/IEC 42001:2023 — AI Management System (applied to organisational AI governance)

→ ISO/IEC 25012:2008 — Data Quality Model (applied to training data quality assessment)

When JTC 21 standards are published, this chapter gets updated with the formal harmonised standards and a mapping of how your existing approach aligns.

EU Declaration of Conformity

Annex IV No. 8 requires a copy of the EU Declaration of Conformity under Article 47. For TalentMatch AI, this is prepared as part of the conformity assessment process under Article 43. The declaration must match the documented system version — if you update the system, the declaration must be updated accordingly.

Step 5: Post-Market Plan (Annex IV No. 9)

The final chapter is forward-looking: how will you monitor TalentMatch AI after it is deployed?

Annex IV No. 9 requires a detailed description of the post-market monitoring system under Article 72. For TalentMatch AI, this plan covers four elements:

Performance tracking: Monthly monitoring of NDCG@10 and fairness metrics across all active deployments. Automated alerts when metrics deviate more than 5% from baseline values.

Feedback collection: Structured feedback loop from HR professionals using the system. Quarterly surveys on ranking quality, false positive rates, and user confidence. Deployer-reported incidents tracked in a centralised log.

Drift detection: Monitoring for data drift (changes in application patterns, job market shifts) and model drift (gradual degradation of ranking accuracy). Retraining triggered when drift exceeds defined thresholds.

Incident response: Defined process for handling serious incidents — from initial detection through root cause analysis to corrective action and documentation update. Reporting obligations under Article 73 (serious incidents) integrated into the process.

The post-market monitoring plan is a living document. It does not just describe what you will do — it defines the triggers, thresholds, and responsibilities for ongoing compliance.
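As an illustration of what "defined triggers and thresholds" can look like, the sketch below flags metrics that move more than 5% away from baseline and computes a simple drift score. Two assumptions are ours, not the article's: the 5% is interpreted as a relative deviation, and the Population Stability Index (PSI) is used as one possible drift measure. All function names are hypothetical.

```python
import math


def relative_deviation(current: float, baseline: float) -> float:
    """Absolute change relative to the baseline value."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return abs(current - baseline) / abs(baseline)


def metrics_to_alert(
    current: dict[str, float], baseline: dict[str, float], threshold: float = 0.05
) -> list[str]:
    """Names of baseline metrics that are missing or deviate beyond the threshold."""
    alerts = []
    for name, base_value in baseline.items():
        if name not in current:
            alerts.append(name)  # a missing measurement is worth flagging too
        elif relative_deviation(current[name], base_value) > threshold:
            alerts.append(name)
    return sorted(alerts)


def population_stability_index(
    expected: list[float], actual: list[float], eps: float = 1e-6
) -> float:
    """PSI between two binned distributions given as per-bin proportions."""
    if len(expected) != len(actual):
        raise ValueError("both distributions need the same number of bins")
    score = 0.0
    for exp_share, act_share in zip(expected, actual):
        exp_share = max(exp_share, eps)  # avoid log(0) and division by zero
        act_share = max(act_share, eps)
        score += (act_share - exp_share) * math.log(act_share / exp_share)
    return score


if __name__ == "__main__":
    baseline = {"ndcg@10": 0.80, "parity_ratio": 0.90}
    this_month = {"ndcg@10": 0.75, "parity_ratio": 0.89}
    print(metrics_to_alert(this_month, baseline))
    print(population_stability_index([0.5, 0.3, 0.2], [0.4, 0.3, 0.3]))
```

Whatever drift measure you choose, the monitoring plan should state the threshold that triggers retraining and who decides; the code only makes that rule executable.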

Common Mistakes When Implementing Article 11 Technical Documentation in Practice

Four mistakes appear repeatedly when teams build their Article 11 documentation:

Mistake 1: Rewriting Everything

The biggest time waste is treating every Annex IV chapter as a standalone document. Chapters 2(d), 2(e), 2(g), 2(h), 3, and 5 all reference existing Article documentation. For TalentMatch AI, the Article 9 RMS, Article 10 data governance, Article 14 human oversight, and Article 15 accuracy documentation already exist. Annex IV assembles them — it does not duplicate them. Use cross-references. Write once, reference everywhere.

Mistake 2: Generic Performance Metrics

Stating "92% accuracy" without context is meaningless. For TalentMatch AI, NDCG@10 measures ranking quality, demographic parity measures fairness, and test-retest reliability measures consistency. Each metric must be justified as appropriate for the specific system and its intended purpose. Annex IV No. 4 explicitly requires this justification.

Mistake 3: Forgetting the Change History

Many teams document the current state of the system but not how it evolved. Annex IV No. 6 requires a traceable record of all relevant changes — with dates, descriptions, compliance impact, and responsible persons. Start this log from day one. Reconstructing a change history retroactively is painful and unreliable.

Mistake 4: Post-Market as an Afterthought

The monitoring plan (Annex IV No. 9) is not a checkbox — it is an operational commitment. For TalentMatch AI, this means defined metrics, thresholds, alert mechanisms, and response processes. A plan that says "we will monitor performance" without specifying how, when, and who is not compliant.

How TrustTroiAI Helps

TrustTroiAI's Article 11 template is designed around the exact challenge we just walked through: turning Annex IV requirements into a structured, completable document without starting from a blank page.

The process begins before you write a single line. When you open the Article 11 template, Diana (our regulatory library) checks your prerequisites automatically. If you have already completed your Article 9 Risk Management and Article 10 Data Governance documentation for the same system, Diana detects them and adopts the relevant data — system name, purpose, annex classification — directly into the Article 11 workflow. No duplicate data entry.

The compliance journey on the left side shows exactly where you stand: Article 9 completed, Article 11 in progress, Articles 13 and 47 still ahead. This is not just a progress bar — it reflects the actual regulatory dependency chain.

The guided questionnaire then walks you through the system context decisions step by step. Each question maps directly to a specific Annex IV requirement: Is your system standalone or integrated into a product? Does it use machine learning? Is it subject to other EU harmonisation laws? Your answers shape the structure of the generated document — conditional sections appear or disappear based on what applies to your system.

Cross-references are built in automatically. When the questionnaire detects that your system uses ML, it surfaces the Article 10 Data Governance connection and reminds you that datasheets (Annex IV No. 2(d)) should be referenced from your existing Art. 10 documentation.

Based on your answers, the template generates a structured DOCX document that maps directly to Annex IV numbering. The cover page includes all system metadata — system name, provider, annex classification, document version, creation date, and the applicable documentation track (SME Track or Full Track). The document control section provides a version history table with dates, change descriptions, and responsible persons — exactly the format regulators expect under Annex IV No. 6.

Every chapter is pre-structured with the required elements:

→ Sections marked "↑ Generated" are pre-filled from your questionnaire answers

→ Sections marked "→ Art. 9 / Art. 10 / Art. 13 / Art. 14 / Art. 15" are cross-reference placeholders connecting to your existing documentation

→ Sections marked "Anbieter" (German for "Provider") require your team's technical input

→ Conditional sections (like product integration photos) appear only when relevant

Once your documentation is assembled, Bruno (our expert validation layer) reviews the completeness: are all 9 chapters addressed? Are cross-references correctly linked? Are conditional sections properly handled? Bruno flags gaps before you submit.

And if you have questions along the way, Finn (our knowledge assistant) can explain specific Annex IV requirements, clarify what belongs in which chapter, and help you understand the regulatory context behind each section.

Build your Article 11 documentation with TrustTroiAI

From guided questionnaire to structured DOCX — the fastest path to Annex IV compliance.

→ Start your documentation: trusttroiai.com

→ Theory first: Article 11 EU AI Act Explained [link to Blog 1]

→ Foundation: Article 9 Risk Management System [link to Art. 9 blog]

Key Takeaways

→ Article 11 documentation is not a standalone project — if you have completed Articles 9, 10, 14, and 15, large parts of Annex IV are already covered through cross-references.

→ The 5-step framework (Scope → Foundation → Performance & Risk → Compliance → Post-Market) structures the work into manageable phases mapped to Annex IV chapters.

→ Performance metrics must be system-specific — for TalentMatch AI, NDCG@10, fairness metrics, and test-retest reliability replace generic accuracy claims.

→ Start your change history from day one. Reconstructing it later is painful and the traceability that regulators expect will be missing.

→ The post-market monitoring plan is an operational commitment with defined metrics, thresholds, and response processes — not a paragraph promising to "monitor performance."

Source

PRIMARY SOURCES:

1. EU AI Act 2024/1689, Article 11 — Technical Documentation

2. EU AI Act 2024/1689, Annex IV — Technical Documentation Requirements

3. EU AI Act 2024/1689, Annex III, Point 4(a) — Employment AI Systems

RELATED ARTICLES:

4. EU AI Act 2024/1689, Articles 9, 10, 13, 14, 15, 17, 43, 47, 72, 73

STANDARDS:

5. IEEE 7003-2024: Standard for Algorithmic Bias Considerations

6. ISO/IEC 42001:2023: AI Management System

7. CEN-CENELEC JTC 21 — Harmonised Standards for the AI Act (in development)
