
Article 11 in Practice: How to Build Technical Documentation for High-Risk AI

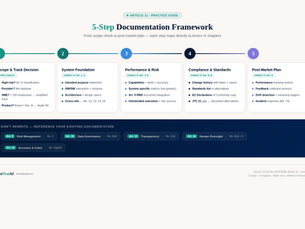

In our previous article, we explained what Article 11 requires — the 9 chapters of Annex IV, the SME track, and how the technical documentation connects to almost every other obligation in the AI Act. Now the question is: how do you actually build it? Theory is useful. But when you sit down to create your technical documentation, you need a concrete process — not just a list of requirements. In this article, we walk through the entire documentation process step by step, using

cici BEL

Apr 29 · 12 min read

Article 10 in Practice: How to Build Compliant Data Governance for High-Risk AI

Article 10 tells you what compliant data governance looks like. But knowing the requirements and actually implementing them are two different things. How do you document design decisions in practice? How do you examine datasets for bias? How do you map your data to the stakeholders it affects? This guide answers those questions with a step-by-step methodology you can follow today. We'll use a runn

cici BEL

Apr 23 · 12 min read

Article 9 EU AI Act: The Risk Management System Explained

180 pages. 113 articles. And if you're building a High-Risk AI system, Article 9 might be the most important one you need to understand. Why? Because Article 9 doesn't ask you to tick a box once. It requires you to build and maintain a Risk Management System (RMS) — a continuous, adaptive process that spans the entire lifecycle of your AI system. If Article 50 is about transparency (telling people they're interacting with AI), Article 9 is about responsibility: systematicall

jimsigne

Apr 7 · 8 min read
