Article 10 in Practice: How to Build Compliant Data Governance for High-Risk AI

- cici BEL

- Apr 23

- 12 min read

Article 10 in Practice: How to Build Compliant Data Governance for High-Risk AI

Article 10 tells you what compliant data governance looks like. But knowing the requirements and actually implementing them are two different things. How do you document design decisions in practice? How do you examine datasets for bias? How do you map your data to the stakeholders it affects? This guide answers those questions with a step-by-step methodology you can follow today.

We'll use a running example throughout: TalentMatch AI, a fictional AI-powered Applicant Tracking System that screens job applications and ranks candidates. As a system listed in Annex III of the AI Act (employment and worker management), it's classified as high-risk — and Article 10 applies in full.

The Implementation Framework

Article 10 compliance isn't a one-time checklist — it's an ongoing process integrated into your AI lifecycle. We'll structure implementation around six practical steps that map directly to the legal requirements while following the methodology established in IEEE 7003-2024, the standard for algorithmic bias considerations.

Step 1: Stakeholder Identification — Who influences and who is impacted by your AI system?

Step 2: Data Inventory & Origin Documentation — Where does your data come from and how was it collected?

Step 3: Data Representation Mapping — How well does your data represent your identified stakeholders?

Step 4: Bias Analysis & Profiling — What biases exist and how do they affect different groups?

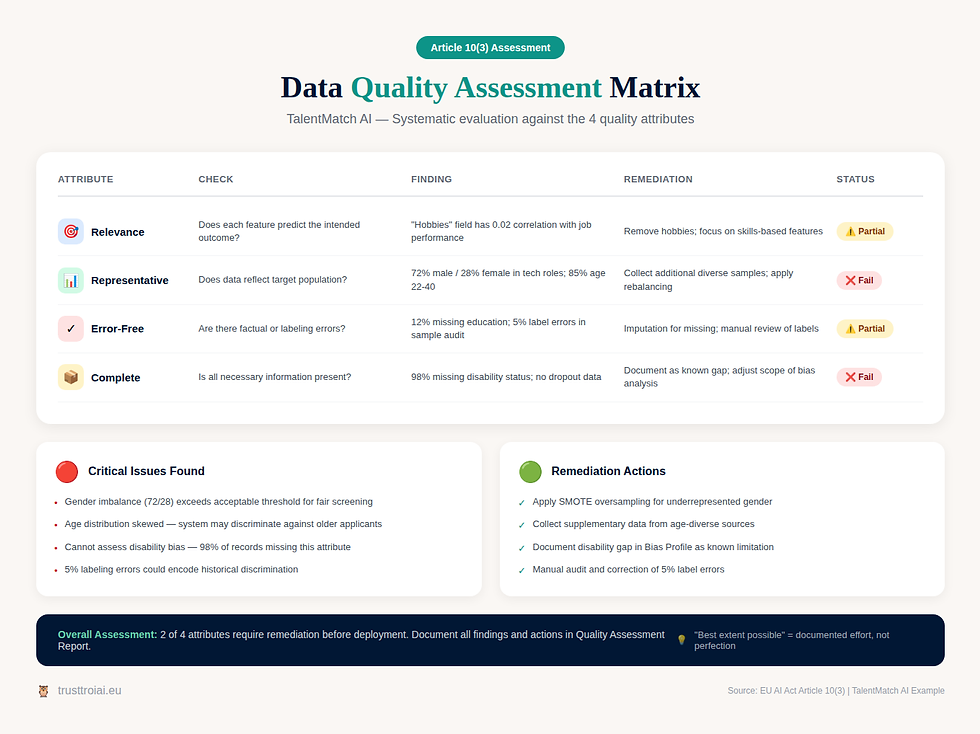

Step 5: Quality Assessment — Does your data meet the four quality attributes?

Step 6: Documentation & Continuous Monitoring — How do you prove compliance and catch drift?

Step 1: Stakeholder Identification

Before you can assess whether your data is representative, you need to know: representative of whom? IEEE 7003 Clause 6 provides the methodology. The goal is to identify all parties who influence the AI system (influencing stakeholders) and all parties who are impacted by its outputs (impacted stakeholders). Many stakeholders fall into both categories.

TalentMatch AI Example: For our ATS system, influencing stakeholders include the development team (data scientists, engineers), the HR department that configures screening criteria, the company leadership that sets hiring policies, and third-party data providers (if any). Impacted stakeholders include job applicants (directly affected by screening decisions), hiring managers (who receive filtered candidate lists), current employees (whose data may be used for "successful employee" profiles), and unsuccessful candidates (who may face discrimination if bias exists).

Identifying Attributes: For each stakeholder group, document relevant attributes. For job applicants, this includes demographic attributes (age, gender, ethnicity, disability status), professional attributes (education, experience, skills), and contextual attributes (geographic location, employment status). Some attributes are protected under anti-discrimination law — these require special attention. IEEE 7003 calls these "protected attributes" and requires documenting why they need protection and what measures you're taking.

Practical Output: Create a Stakeholder Reference Set that lists all stakeholder groups, classifies them as influencing/impacted/both, documents their relevant attributes, identifies which attributes are protected, and ranks stakeholders by priority for bias analysis. This becomes the foundation for everything that follows.

Step 2: Data Inventory & Origin Documentation

Article 10(2) requires documenting your data collection processes and origin. IEEE 7003 Section 7.4.2.1 provides a 17-point metadata checklist that operationalizes this requirement. Work through each question systematically.

TalentMatch AI Example — Data Sources: Our ATS uses three primary data sources.

First, historical application data containing 50,000 past applications with outcomes (hired/rejected), collected over 5 years from the company's previous ATS.

Second, employee performance data including performance reviews and tenure information for 2,000 current employees, extracted from the HR management system.

Third, job posting data with 500 job descriptions and their requirements, created by hiring managers.

Document for Each Data Source:

Where was it sourced? (Internal HR systems, previous vendor)

Why was this source chosen? (Historical availability, relevance to hiring outcomes)

What was the original collection purpose? (Operational HR management, not AI training)

Collection mode:

was it provided voluntarily? (Applicants submitted data; employees consented to performance tracking)

Was there an opt-out option? (No explicit opt-out for AI training use)

Jurisdiction:

was data imported from other countries? (Some applications from international candidates)

Is the data anonymized or pseudonymized? (Pseudonymized with candidate IDs)

Red Flag Check: Our historical data was collected when AI screening wasn't the intended purpose. This means GDPR purpose limitation may require updated consent or a legitimate interest assessment. Document this gap and your remediation plan.

Step 3: Data Representation Mapping

This is where stakeholder identification meets data analysis. The question: does your data adequately represent all identified stakeholder groups? IEEE 7003 Section 7.4.2.3 calls this "mapping data for the bias profile."

TalentMatch AI Example — Representation Analysis: We map our historical application data against the stakeholder attributes identified in Step 1. The analysis reveals several imbalances:

Gender: 72% male, 28% female applicants in technical roles — underrepresentation of women.

Age: 85% of applicants aged 22-40, only 15% over 40 — underrepresentation of older workers.

Disability: Only 2% of applicants disclosed disability status — likely underreporting due to stigma concerns.

Education: 60% from top-20 universities — potential socioeconomic bias proxy.

Geography: 90% from major metropolitan areas — rural candidates underrepresented.

Document Imbalances: Each imbalance needs documentation. For gender imbalance, you document: the observed ratio (72/28), the expected ratio (e.g., 50/50 for gender-neutral roles, or industry benchmark), the magnitude of underrepresentation, and the potential impact (system may learn to prefer male candidates if historical hiring was biased).

Identify Proxy Variables: This is critical. A proxy variable is a feature that appears neutral but correlates with a protected attribute. In our example: University name may proxy for socioeconomic status and, indirectly, for ethnicity. Postal code of current residence may proxy for ethnicity and income level. Years since graduation directly reveals age. Gaps in employment history may correlate with gender (parental leave) or disability. Document all identified proxies and your strategy for handling them.

Step 4: Bias Analysis & Profiling

Article 10(2) requires examining datasets for biases that could harm health and safety, negatively affect fundamental rights, or result in discrimination. IEEE 7003 structures this through the "Bias Profile" — a living document that captures bias considerations throughout the AI lifecycle.

Three Types of Bias to Analyze: Historical bias exists in the training data because it reflects past discrimination. If your company historically preferred certain demographics, that pattern is encoded in the data. Representation bias exists when certain groups are underrepresented in training data, causing the model to perform worse for them. Measurement bias exists when the features used don't measure the same thing across groups — for example, if "culture fit" scores were assigned by interviewers with unconscious bias.

TalentMatch AI Example — Bias Analysis: We conduct quantitative analysis on our historical data. Outcome analysis shows that among equally qualified candidates (same education level, same years of experience), male candidates were hired at 1.4x the rate of female candidates. This suggests historical gender bias. Correlation analysis reveals that university prestige correlates 0.7 with hiring outcome but only 0.3 with subsequent job performance — suggesting it's a poor predictor inflated by interviewer bias. Feedback loop risk exists because if the model learns from biased historical decisions, it will perpetuate them in future screening.

Create the Bias Profile: Document each identified bias with: description (what is the bias?), affected stakeholders (who does it impact?), severity assessment (how significant is the impact?), root cause (where does it originate?), and mitigation strategy (how will you address it?). The bias profile is updated throughout the AI lifecycle — it's not a one-time document.

Mitigation Strategies: For TalentMatch AI, we implement several mitigations. We remove or de-weight university name as a feature since it's a poor performance predictor and a socioeconomic proxy. We apply rebalancing techniques to correct for historical gender bias in outcomes. We exclude postal code entirely since it proxies for protected attributes without predictive value. We add diverse validation data by collecting additional applications from underrepresented groups for validation testing.

Step 5: Quality Assessment

Article 10(3) requires that datasets are relevant, representative, error-free (to the best extent possible), and complete. Assess each attribute systematically.

Relevance Assessment: For each feature in your dataset, ask: does this feature help predict the outcome we care about? For TalentMatch AI, relevant features include skills mentioned in resume (directly relevant to job requirements), years of experience in relevant field (predictive of capability), and specific certifications (relevant for technical roles). Features with questionable relevance include hobbies and interests (rarely predictive), photo or personal appearance data (irrelevant and potentially discriminatory), and social media activity (privacy concerns, unclear relevance).

Representativeness Assessment: Compare your data distribution against the target population distribution. For TalentMatch AI, if the system will screen applications from across Europe, but training data is 80% from Germany, the data isn't representative of the deployment context. This directly triggers Article 10(4) — contextualization requirements. Document gaps and your plan to address them (collect additional data, apply domain adaptation techniques, limit deployment scope).

Error-Freeness Assessment: Conduct data quality checks. For our ATS, we check for missing values (12% of records missing education details — significant gap), inconsistent formatting (dates in multiple formats — needs standardization), duplicate records (3% duplicates found — need deduplication), and labeling errors (sample audit found 5% of hire/reject labels were incorrect — needs correction). Document all errors found, their magnitude, and your remediation actions. Remember: perfection isn't required, but documented quality assurance is.

Completeness Assessment: Is all necessary information present? For TalentMatch AI, we're missing disability status for 98% of applicants — we cannot assess bias for this protected group without data. We have no data on candidates who started the application but didn't complete it — potential selection bias. We lack rejection reason documentation — we can't distinguish between "unqualified" and "qualified but not selected."

Step 6: Documentation & Continuous Monitoring

Article 10 creates a best-efforts obligation — "obligation de moyens." Proving your efforts requires comprehensive, traceable documentation. IEEE 7003 structures this through the Bias Profile repository, which contains outputs from all previous steps.

Required Documentation: Your data governance documentation should include: Stakeholder Reference Set with all identified stakeholders, their attributes, and protected attribute designations.

Data Origin Documentation for each data source with the 17-point metadata checklist completed.

Representation Mapping showing how data maps to stakeholder attributes with documented imbalances.

Bias Profile documenting all identified biases, their severity, and mitigation strategies.

Quality Assessment Report covering all four attributes with findings and remediation.

Decision Log explaining why certain features were included/excluded, why certain data sources were chosen, and what tradeoffs were made.

Continuous Monitoring: Article 10 compliance isn't a one-time certification. IEEE 7003 Section 9.3 addresses ongoing evaluation. Monitor for

data drift — when production data characteristics change from training data (e.g., applicant pool demographics shift). Monitor for

concept drift — when the relationship between inputs and outcomes changes (e.g., skills that predicted success in 2020 may not predict success in 2025). Monitor for

feedback loops — when system outputs influence future inputs, potentially amplifying bias over time.

TalentMatch AI Monitoring Plan: We implement quarterly bias audits comparing screening outcomes across demographic groups. We track monthly data distribution reports comparing new applications to training data distributions. We establish automatic alerts when hiring rate disparities exceed defined thresholds. And we conduct annual stakeholder reviews to reassess whether stakeholder identification remains complete.

Practical Examples from Different Domains

The framework above applies to any high-risk AI system. Here's how it manifests in different contexts.

Medical Diagnosis AI: Stakeholders include patients (impacted by diagnosis accuracy), clinicians (who act on AI recommendations), and hospital administrators. Key bias risks include training data from US hospitals that may not represent European patient populations, underrepresentation of rare diseases, and demographic bias if training data skewed toward certain ethnic groups. Article 10(4) contextualization is critical — a system trained on US data requires German validation data before German deployment.

Credit Scoring AI: Stakeholders include loan applicants (directly impacted by credit decisions), lending institutions, and regulators. Key bias risks involve historical lending discrimination encoded in training data, proxy variables like postal code correlating with ethnicity, and feedback loops where denied applicants never generate positive repayment data. Article 10(5) may apply — if you need ethnicity data to detect bias, you must follow the cascade principle and six cumulative conditions.

NLP-Based Content Moderation: Stakeholders include content creators (whose posts are evaluated), platform users (who see moderated content), and the platform itself. Key bias risks include training data scraped from public sources that reflect societal biases, language model bias that performs differently across dialects or languages, and cultural bias where content acceptable in one culture is flagged based on another culture's norms. Proxy risk is high — certain linguistic patterns may correlate with demographic groups.

Common Implementation Mistakes

Based on industry practice and regulatory guidance, these are the pitfalls to avoid.

Mistake 1: Skipping Stakeholder Identification — Teams often jump directly to data analysis without first identifying who is affected. Result: you can't assess representativeness without knowing "representative of whom." Fix: always start with stakeholder identification, even if it seems obvious.

Mistake 2: Ignoring Proxy Variables — Removing protected attributes like gender or ethnicity from features doesn't eliminate bias if correlated proxies remain. Postal code, university name, and employment gaps can all encode protected information. Fix: conduct correlation analysis between all features and protected attributes; address proxies, not just direct attributes.

Mistake 3: Assuming Synthetic Data is Bias-Free — Synthetic data generated from biased real data will inherit that bias. The generation algorithm itself can introduce new biases. Fix: analyze synthetic data for bias just as rigorously as real data; document the generation process.

Mistake 4: One-Time Compliance Mindset — Treating Article 10 as a checkbox exercise rather than an ongoing process. Data drifts, populations change, and system behavior evolves. Fix: implement continuous monitoring; schedule regular bias audits; update documentation as the system changes.

Mistake 5: Documentation Gaps — Doing the right things but failing to document them. Under "obligation de moyens," your documentation is your proof. Fix: document decisions as you make them; maintain a decision log; version control all artifacts.

Special Case: Article 10(5) — Using Sensitive Data

Sometimes you need data about protected attributes (ethnicity, health status, etc.) specifically to detect and correct bias. Article 10(5) permits this — but only under strict conditions.

TalentMatch AI Example: Our bias analysis reveals potential ethnic bias, but we have no ethnicity data to confirm or quantify it. Can we collect this data? Following the cascade principle: First, try synthetic data — we generate synthetic ethnicity labels based on name analysis. Problem: name-based inference is unreliable and itself potentially discriminatory. Second, try anonymized data — we seek anonymized benchmark datasets with known ethnicity distributions. Problem: no suitable dataset matches our applicant population. Third, actual sensitive data — as a last resort, we consider voluntary ethnicity disclosure for bias analysis only.

If You Proceed with Sensitive Data: All six conditions must be met. Document that synthetic and anonymized alternatives were insufficient (condition a). Implement technical measures preventing reuse — the data is stored separately, encrypted, with no connection to individual applications (condition b). Restrict access to two named data scientists with signed confidentiality agreements (condition c). Ensure no third parties ever access the data (condition d). Establish automatic deletion 30 days after bias analysis completion (condition e). Update GDPR Article 30 records with full justification (condition f).

Coordinate with GDPR: Conduct a joint assessment combining DPIA (GDPR Article 35) with FRIA (AI Act Article 27). This ensures you've covered both data protection and fundamental rights impacts without duplication.

How TrustTroiAI Helps

Implementing Article 10 requires systematic process execution across all six steps — and maintaining that process over time. TrustTroiAI provides structured support at each stage.

Template 10: Data Governance — Our guided workflow walks you through stakeholder identification with structured prompts for influencing and impacted stakeholders. It includes the full IEEE 7003 metadata checklist for data origin documentation. The representation mapping tool helps you compare your data distribution against stakeholder attributes. The bias profiling framework structures your analysis with severity scoring and mitigation tracking. The quality assessment covers all four attributes with findings documentation. And version-controlled documentation ensures every decision is traceable.

Integration with Other Templates: Template 10 connects to Template 9 (Risk Management) because data biases are risk sources that feed into your Article 9 RMS. It connects to Template 27 (FRIA) for fundamental rights impact assessment coordination. And it connects to your GDPR documentation for joint DPIA+FRIA assessments.

Finn (Context Assistant provides situation-specific guidance when you encounter edge cases — like determining whether your data adequately represents a deployment context, or assessing whether a proxy variable should be removed or de-weighted.

Data governance isn't just compliance — it's the foundation of trustworthy AI. Start your Article 10 assessment at trusttroiai.com and build data governance that proves you did it right.Key Takeaways on Article 10 Compliance

Article 10 implementation follows six practical steps: stakeholder identification, data inventory, representation mapping, bias analysis, quality assessment, and documentation with continuous monitoring. IEEE 7003-2024 provides the operational methodology — particularly the 17-point metadata checklist and bias profile framework. TalentMatch AI illustrates how these requirements manifest for an employment-related high-risk system.

Proxy variables are often more dangerous than direct protected attributes — postal codes, university names, and employment gaps can all encode protected information. Synthetic data is not automatically bias-free — it inherits bias from source data and can introduce new bias through the generation process. Documentation is your proof — under "obligation de moyens," what you can demonstrate matters as much as what you did. And Article 10(5) creates a narrow pathway for sensitive data when needed for bias detection — but only after exhausting alternatives and meeting all six cumulative conditions.

Data governance is where AI compliance becomes tangible. The code, the models, the outputs — they all flow from the data. Get Article 10 right, and you've built the foundation for everything else.

Source

[1] Regulation (EU) 2024/1689 — Artificial Intelligence Act

Article 10 (Data Governance)

[2] IEEE 7003-2024

[3] The Academic Guide to AI Act Compliance

hal-05365570v1

[4] CEN-CENELEC JTC 21

Joint Technical Committee on Artificial Intelligence

Developing harmonised standards for AI Act compliance

[5] TrustTroiAI

"The AI Act Framework: 4 Steps to Clarity"

Comments